In 2026, the “robot boss” is no longer a metaphor. As of January 1, landmark regulations in California and new federal guidelines have granted workers unprecedented rights to audit the algorithms that monitor, score, and discipline them.

The workplace of 2026 is governed by high-resolution surveillance systems—often called “bossware”—that go far beyond tracking keystrokes. These tools now analyze “collaboration density,” sentiment in internal communications, and real-time “productivity scores” to automate management decisions. However, the legal tide has turned. With the full implementation of California’s SB 238 and updated CCPA regulations, January 2026 marks the beginning of “Data Sovereignty” for employees. For the first time, workers have the legal standing to look “under the hood” of the automated systems that determine their professional fate.

The Rise of “Sentiment Scoring” and Collaboration Metrics

In early 2026, bossware has evolved into a sophisticated psychometric tool. Companies now utilize Natural Language Processing (NLP) to monitor Slack, Teams, and email communications for more than just compliance; they are looking for “burnout signals” and “organizational loyalty.”

- Collaboration Density: Algorithms now track how often you interact with high-performing peers, then use the resulting interaction graphs to predict your promotion potential.

- Sentiment Analysis: AI agents scan for shifts in your tone that might indicate dissatisfaction or a high “flight risk” (intention to quit), often flagging you to HR before you’ve even updated your resume.

- Gaze and Focus Tracking: For remote roles, 2026 software utilizes laptop cameras to verify “active engagement” during meetings, scoring you on eye contact and attention span.
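
To make the first metric less abstract, here is a minimal sketch of how a "collaboration density" figure could be computed. Vendors do not publish their formulas, so everything here is an assumption for illustration: the function name `collaboration_density`, the idea of scoring the share of a worker's logged exchanges that involve a peer labelled high-performing, and the sample names.

```python
from collections import Counter

def collaboration_density(interactions, high_performers):
    """Return, per worker, the share of their logged exchanges that
    involve a peer labelled as high-performing.

    interactions: iterable of (worker_a, worker_b) pairs, one per exchange.
    high_performers: set of worker ids (a hypothetical employer label).
    """
    total = Counter()
    with_high = Counter()
    for a, b in interactions:
        # Each exchange counts once for both participants.
        for me, peer in ((a, b), (b, a)):
            total[me] += 1
            if peer in high_performers:
                with_high[me] += 1
    return {worker: with_high[worker] / total[worker] for worker in total}

# Hypothetical message log: each pair is one exchange between two workers.
log = [("ana", "bo"), ("ana", "cy"), ("bo", "cy"), ("ana", "bo")]
scores = collaboration_density(log, high_performers={"bo"})
```

Even this toy version shows why the metric is contested: a worker's score depends on who they happen to be assigned to work with, not only on what they do.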

SB 238 and the “Surveillance Inventory” Mandate

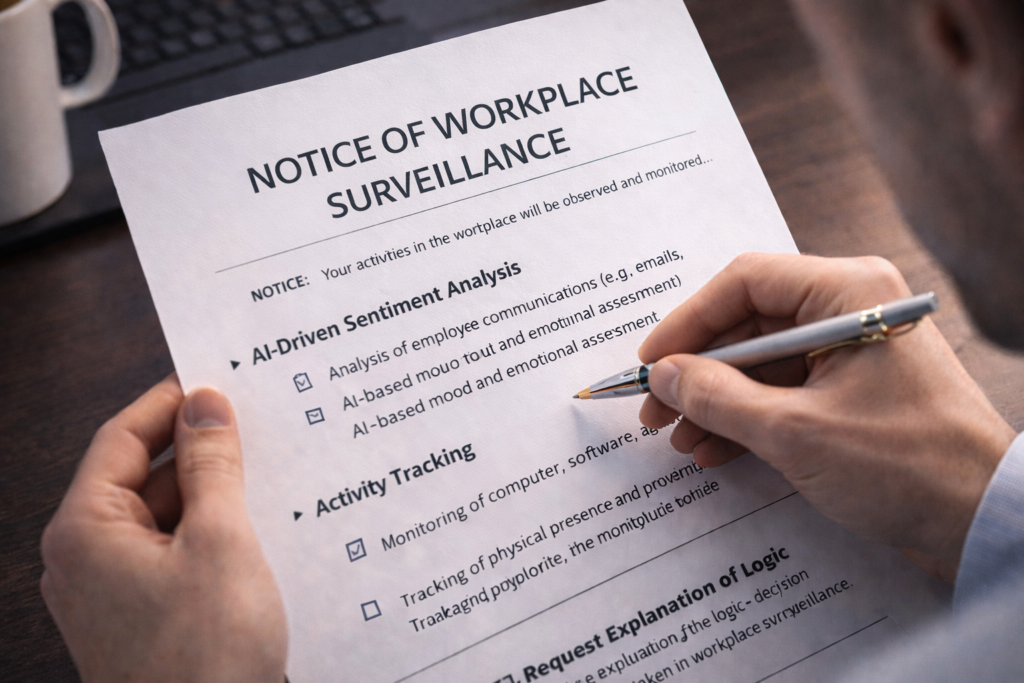

A critical deadline for employers arrives on February 1, 2026. Under California’s SB 238, all employers must provide an annual notification to the Department of Industrial Relations (DIR) and their employees detailing every workplace surveillance tool in use.

If your employer used surveillance tools before January 1, 2026, they must provide you with a comprehensive notice by February 1, 2026, explaining the tool’s name, the specific data it collects, and the employment decisions (like pay or discipline) it influences.

This “Surveillance Inventory” allows workers to understand exactly what is being tracked—whether it’s GPS location on company vehicles, browser history, or AI-driven performance ranking.
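
If you want to check a notice against the three items listed above (tool name, data collected, decisions influenced), a small structure like the following may help. It is only a sketch: the class `ToolNotice`, its field names, and the helper `missing_fields` are hypothetical and are not taken from the bill text or any official form.

```python
from dataclasses import dataclass, field

@dataclass
class ToolNotice:
    # Field names are illustrative, not an official schema.
    tool_name: str
    data_collected: list = field(default_factory=list)
    decisions_influenced: list = field(default_factory=list)

def missing_fields(notice):
    """Return the names of notice items that were left empty."""
    missing = []
    if not notice.tool_name.strip():
        missing.append("tool_name")
    if not notice.data_collected:
        missing.append("data_collected")
    if not notice.decisions_influenced:
        missing.append("decisions_influenced")
    return missing

# A hypothetical notice that names the tool and its data but omits
# which employment decisions the tool feeds into.
notice = ToolNotice(
    tool_name="FleetTrack GPS",
    data_collected=["vehicle location", "idle time"],
)
gaps = missing_fields(notice)
```

Here `gaps` lists `decisions_influenced`, which is the kind of omission worth raising with HR in writing.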

Data Sovereignty: Your Rights Under the New ADMT Regs

As of January 2026, Automated Decision-Making Technology (ADMT) is subject to strict “Data Sovereignty” rules. If your company uses AI to make “significant decisions”—defined as those impacting your hiring, promotion, termination, or compensation—you now have four fundamental rights:

- The Right to Pre-Use Notice: You must be told before your data is fed into an automated system for a significant decision.

- The Right to Access Logic: You can request a “plain language” explanation of the algorithm’s logic. For example, if an AI denied you a bonus, the company must explain which metrics (e.g., response time vs. error rate) led to that outcome.

- The Right to Correct Data: If the algorithm is making decisions based on incorrect personal data (such as misattributed sick days), you have a legal right to force a correction.

- The Right to Opt-Out: In certain scenarios, you may opt out of automated decision-making, though this may mean a manual human review of your performance instead.
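
What might a "plain language" explanation look like in practice? The sketch below takes the bonus example from the list above and assumes, purely for illustration, that the score is a weighted sum of metrics already normalized to a 0 to 1 scale. The function `explain_score`, the weights, and the threshold are all hypothetical; real systems are likely to be more complex.

```python
def explain_score(metrics, weights, threshold):
    """Compute a weighted score and describe the outcome in plain language.

    Assumes each metric is normalized to 0..1 (higher is better) and the
    score is a simple weighted sum. This is an illustrative model only.
    """
    contributions = {name: metrics[name] * weights[name] for name in weights}
    score = sum(contributions.values())
    # The metric adding the least to the total is reported as the weak spot.
    lowest_metric = min(contributions, key=contributions.get)
    passed = score >= threshold
    summary = (
        f"Score {score:.2f} against a threshold of {threshold:.2f}: "
        f"{'met' if passed else 'not met'}. "
        f"The weakest contribution came from '{lowest_metric}'."
    )
    return {
        "score": score,
        "passed": passed,
        "lowest_metric": lowest_metric,
        "summary": summary,
    }

# Hypothetical bonus decision based on two metrics with equal weight.
result = explain_score(
    metrics={"response_time": 0.9, "error_rate": 0.2},
    weights={"response_time": 0.5, "error_rate": 0.5},
    threshold=0.6,
)
```

An explanation at this level tells you which input to dispute. If the `error_rate` figure rests on misattributed data, that is where a correction request would start.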

The Mandatory “Human-in-the-Loop” for Discipline

One of the most powerful protections in 2026 is the legal requirement for Meaningful Human Review. Under the new FEHA (Fair Employment and Housing Act) amendments, an employer cannot fire or discipline an employee based solely on an algorithmic output.

A human manager must independently review the automated findings to ensure they aren’t biased or based on “proxy data” (such as using your zip code or health-related absences to unfairly lower your score). If you are fired in 2026 and the decision was generated by a “black box” algorithm without human intervention, you may have grounds for a procedural violation claim.
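
In software terms, the requirement amounts to a gate: an algorithmic recommendation cannot become a final action until a human review is on record. The following is a minimal sketch of that idea, not a description of any real HR system. The classes `HumanReview` and `DisciplineRecommendation` and the function `finalize` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class HumanReview:
    reviewer: str
    agrees_with_algorithm: bool
    notes: str

@dataclass
class DisciplineRecommendation:
    worker_id: str
    action: str  # e.g. "written warning"
    review: Optional[HumanReview] = None

def finalize(rec):
    """Return the action to take, refusing to act without a human review."""
    if rec.review is None:
        # Assumption: acting on algorithmic output alone is not allowed.
        raise PermissionError("no human review on record")
    if not rec.review.agrees_with_algorithm:
        return "dismissed"
    return rec.action

# Hypothetical case: the reviewer overrides the algorithm.
rec = DisciplineRecommendation(worker_id="w-17", action="written warning")
rec.review = HumanReview(
    reviewer="manager-4",
    agrees_with_algorithm=False,
    notes="Absences were approved medical leave.",
)
outcome = finalize(rec)
```

Note that the gate only proves a review happened. Whether that review was "meaningful" depends on the reviewer actually examining the inputs, which is why the `notes` field matters.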

Worker Rights Comparison: 2025 vs. 2026

| Feature | 2025 Standard | 2026 Reality |
| --- | --- | --- |
| Tool Disclosure | Voluntary/Internal | Mandatory (SB 238) by Feb 1st |
| “Robot” Firing | Common in gig/tech | Prohibited without human review |
| Logic Transparency | Proprietary “Black Box” | Right to Access & Explainability |
| Sentiment Analysis | Unregulated | Subject to Privacy Risk Assessments |
| Statute of Limitations | 1–2 years | Expanded to 4–6 years for data claims |

Frequently Asked Questions About 2026 Bossware

Can my boss monitor my home office via webcam in 2026?

Technically, yes, if disclosed in the SB 238 notice. However, 2026 privacy laws in many states now prohibit “continuous” video monitoring that captures family members or non-work-related areas of the home without a specific safety justification.

How do I request an “Impact Assessment”?

If you suspect an algorithm is biased, you (or your legal representative) can request the results of the company’s mandatory Bias Audit or Risk Assessment. Companies using high-risk ADMT in 2026 are required to perform these audits annually.

Does this apply to gig workers (Uber, DoorDash)?

Yes. The 2026 regulations specifically target “Platform Work” and independent contractors, ensuring that algorithmic “deactivations” follow the same human-review standards as traditional employee terminations.