By April 2026, the term “Prompt Engineer” has moved from a high-status job title to a secondary skill listed on a resume. The rapid maturation of Large Language Models (LLMs) has produced self-optimizing “meta-prompters,” rendering the manual crafting of text commands nearly obsolete for high-level technical tasks. In this new landscape, the industry’s focus has shifted toward AI Orchestration, a discipline built on integrating multiple specialized agents into a cohesive, goal-oriented system. The modern professional is no longer evaluated on how they talk to an AI, but on how they design the automated architecture that lets AI function without constant human intervention.

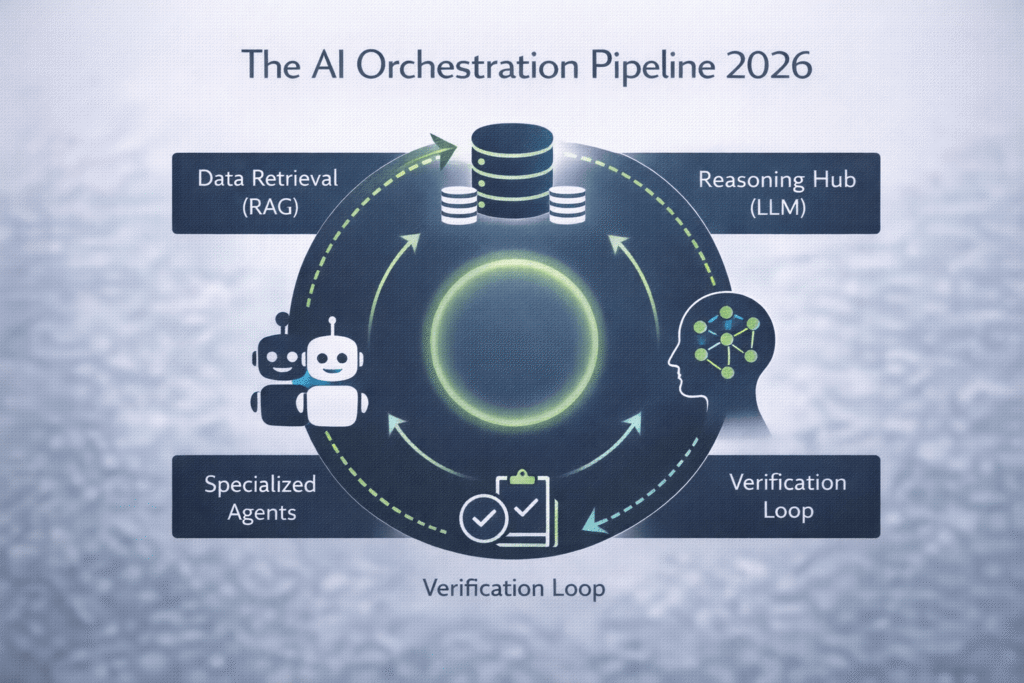

This systemic evolution in 2026 is driven by the realization that isolated chat interfaces are insufficient for enterprise-scale operations. Modern companies require AI “pipelines” where data flows from a retrieval source to a reasoning engine, and finally to a specialized action agent. The AI Orchestrator is the architect of this flow, ensuring that each node in the process is technically sound and cost-efficient. In 2026, being an expert at a single LLM is like knowing a single tool in a factory; an Orchestrator, conversely, is the engineer who designs the entire assembly line.

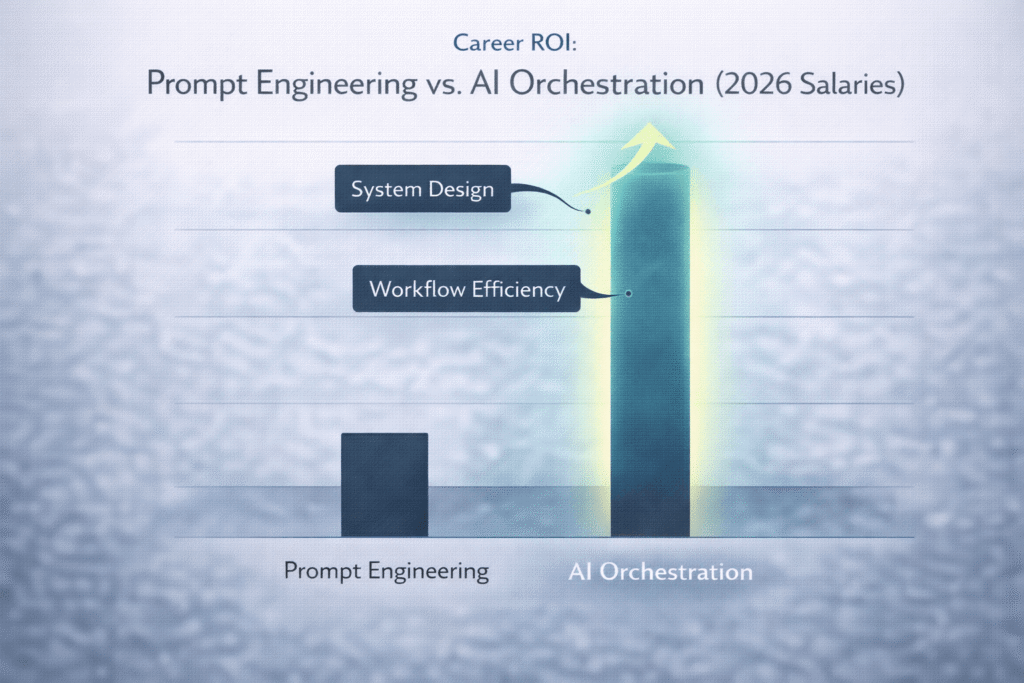

The shift to orchestration has also fundamentally changed the “Entry-to-Career” pipeline. While prompt engineering was accessible to anyone with basic linguistic skills, orchestration requires a deeper understanding of system logic and API interoperability. Professionals who made the transition early are seeing a significant “career premium,” as organizations struggle to manage “Agentic Sprawl”: the chaos that occurs when too many uncoordinated AI agents are deployed within a single network. In 2026, the orchestrator is the primary line of defense against operational inefficiency and hallucinated outputs.

The AI Orchestrator’s Stack: Agents, RAG, and Fine-Tuning

To succeed as an AI Orchestrator in 2026, one must master a multi-layered technical stack that goes beyond the text box. The modern orchestrator uses “agentic frameworks” to create specialized agents with defined roles, such as researcher, writer, or auditor. This requires understanding how to maintain “state” and “memory” across multiple interactions, a challenge that basic prompting never addressed. In 2026, the goal is a system in which the AI knows its objective and has the autonomy to select its own tools to achieve it.
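The role-plus-memory idea can be sketched in plain Python. This is a minimal illustration, not any particular agentic framework’s API: the `Agent` and `SharedMemory` classes are hypothetical, and the lambdas are stubs standing in for real LLM calls.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class SharedMemory:
    """Hypothetical state store that persists across agent turns."""
    entries: list[tuple[str, str]] = field(default_factory=list)

    def write(self, role: str, content: str) -> None:
        self.entries.append((role, content))

    def history(self) -> str:
        # Render prior turns so the next agent sees the accumulated context.
        return "\n".join(f"[{role}] {content}" for role, content in self.entries)

@dataclass
class Agent:
    role: str
    # Stub for an LLM call: receives the task and shared history, returns output.
    act: Callable[[str, str], str]

    def run(self, task: str, memory: SharedMemory) -> str:
        output = self.act(task, memory.history())
        memory.write(self.role, output)
        return output

# Stub behaviours standing in for real model calls (hypothetical).
researcher = Agent("researcher", lambda task, hist: f"notes on {task}")
writer = Agent("writer", lambda task, hist: f"draft using {hist.count('[researcher]')} research note(s)")
auditor = Agent("auditor", lambda task, hist: "approved" if "[writer]" in hist else "rejected")

memory = SharedMemory()
for agent in (researcher, writer, auditor):
    agent.run("Q3 churn", memory)

print(memory.history())
```

Because every agent reads from and writes to the same store, each role sees what earlier roles produced without the orchestrator re-pasting context into every prompt by hand.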

A critical component of this stack is Retrieval-Augmented Generation (RAG) 2.0. By 2026, RAG has evolved from simple document lookup to real-time, multi-modal data integration. Orchestrators design the vector databases and “embedding” strategies that let an LLM access a company’s private, up-to-the-minute data without constant, expensive retraining. This architecture grounds the AI’s output in retrieved source data (“ground truth”) rather than probabilistic guessing, making it far more reliable for legal, financial, and medical applications.
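A minimal sketch of the retrieve-then-generate step, assuming a toy bag-of-words vector in place of a learned embedding model and an in-memory list in place of a vector database. The names `embed`, `VectorIndex`, and `build_prompt` are illustrative, not from any library.

```python
import math
import re
from collections import Counter

def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words term-count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

class VectorIndex:
    """Minimal in-memory index; a production system would use a vector database."""

    def __init__(self) -> None:
        self.docs: list[tuple[str, Counter]] = []

    def add(self, doc: str) -> None:
        self.docs.append((doc, embed(doc)))

    def search(self, query: str, k: int = 2) -> list[str]:
        q = embed(query)
        ranked = sorted(self.docs, key=lambda d: cosine(q, d[1]), reverse=True)
        return [doc for doc, _ in ranked[:k]]

def build_prompt(question: str, index: VectorIndex) -> str:
    # Ground the model by placing retrieved passages ahead of the question.
    context = "\n".join(f"- {p}" for p in index.search(question))
    return f"Answer using only these sources:\n{context}\n\nQuestion: {question}"

index = VectorIndex()
index.add("The refund policy allows returns within 30 days of purchase.")
index.add("Office hours are 9am to 5pm on weekdays.")
index.add("Refunds are issued to the original payment method.")
print(build_prompt("What is the refund policy for returns?", index))
```

Swapping `embed` for a real embedding model and `VectorIndex` for a vector store changes retrieval quality, not the shape of the pipeline: the model still answers from retrieved text rather than from its weights alone.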

AI Orchestration in 2026 is not about talking to machines; it is about building the cognitive nervous system of the modern corporation, where agents collaborate autonomously to solve complex business logic.

In 2026, the orchestrator’s stack is defined by four technical pillars that ensure system reliability:

- Multi-Agent Coordination: Managing the “handoff” protocols between different specialized models.

- Dynamic RAG Pipelines: Integrating live data streams into the AI’s reasoning process.

- Fine-Tuning Governance: Knowing when to tweak a model’s weights versus when to adjust the system’s instructions.

- Observability and Auditing: Implementing “Log-Analysis” agents that monitor other agents for bias or logic errors.
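The first and last pillars can be made concrete with a small handoff contract. In this sketch (all names hypothetical, not from a specific framework), an agent passes work forward in a typed envelope, the receiver rejects handoffs that are incomplete or low-confidence, and every decision lands in an audit log that a log-analysis agent could later inspect. The required keys and the 0.7 confidence threshold are assumptions.

```python
from dataclasses import dataclass, field

@dataclass(frozen=True)
class Handoff:
    """Hypothetical envelope passed from one specialized agent to the next."""
    sender: str
    recipient: str
    task_id: str
    payload: dict
    confidence: float  # sender's self-reported confidence, 0.0 to 1.0

REQUIRED_KEYS = {"summary", "sources"}  # assumed contract between the two agents

@dataclass
class AuditLog:
    """Append-only record of handoff decisions for a log-analysis agent to review."""
    events: list[str] = field(default_factory=list)

def accept(h: Handoff, log: AuditLog, min_confidence: float = 0.7) -> bool:
    """Validate a handoff against the contract and log the outcome either way."""
    missing = REQUIRED_KEYS - set(h.payload)
    if missing:
        log.events.append(f"{h.task_id}: rejected, missing {sorted(missing)}")
        return False
    if h.confidence < min_confidence:
        log.events.append(f"{h.task_id}: rejected, confidence {h.confidence:.2f}")
        return False
    log.events.append(f"{h.task_id}: {h.sender} -> {h.recipient} accepted")
    return True

log = AuditLog()
good = Handoff("researcher", "writer", "T1", {"summary": "...", "sources": ["doc-7"]}, 0.9)
weak = Handoff("researcher", "writer", "T2", {"summary": "..."}, 0.95)
print(accept(good, log), accept(weak, log))  # True False
print(log.events)
```

Logging rejections as well as acceptances is the design choice that makes the observability pillar possible: an auditing agent can only flag patterns it can see.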

Finally, the 2026 orchestrator must focus on Inference Optimization. Using a high-tier model for a low-tier task is an orchestration failure. The orchestrator uses a “routing” layer to send simple queries to small, efficient models and complex tasks to larger, high-parameter LLMs. This balancing act is what keeps AI systems sustainable and scalable in the 2026 fiscal environment, and its resource savings flow directly to the organization’s bottom line.
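A minimal routing-layer sketch, assuming a keyword-and-length heuristic as the complexity signal. The model names, prices, and marker words are hypothetical placeholders, not real products or rate cards.

```python
import re
from dataclasses import dataclass

@dataclass(frozen=True)
class ModelTier:
    name: str                  # hypothetical model identifier
    cost_per_1k_tokens: float  # illustrative price, not a real rate card

SMALL = ModelTier("small-distilled", 0.0002)
LARGE = ModelTier("frontier-large", 0.01)

# Crude signals that a request needs multi-step reasoning (an assumption, not a standard list).
COMPLEX_MARKERS = {"analyze", "compare", "plan", "prove", "why", "explain"}

def route(query: str, max_simple_words: int = 40) -> ModelTier:
    """Send short, routine requests to the small model; escalate long or reasoning-heavy ones."""
    words = re.findall(r"[a-z0-9']+", query.lower())
    if len(words) > max_simple_words or not COMPLEX_MARKERS.isdisjoint(words):
        return LARGE
    return SMALL

for q in ("Summarize this email in one sentence.",
          "Compare our Q2 and Q3 churn drivers and plan a retention test."):
    tier = route(q)
    print(f"{tier.name} at {tier.cost_per_1k_tokens} per 1k tokens: {q}")
```

A production router could replace the keyword check with a small classifier model, but the design choice is the same: pay frontier-model prices only when the request needs them.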

The “Systemic Value” of Orchestration in 2026

The systemic value of orchestration lies in its ability to bridge the gap between raw AI power and business outcomes. In 2026, an orchestrator doesn’t just deliver a “result”; they deliver a reproducible process. By documenting the “logic gates” and “validation loops” within an AI workflow, they create a persistent asset that the company can scale across departments. This move from “transient prompts” to “persistent workflows” is what defines the 2026 career path as a high-value engineering role rather than a creative writing exercise.

Furthermore, orchestrators are the masters of “Context Window Management.” Even though 2026 models have massive context capacities, the cost and latency of large inputs demand precision. The orchestrator designs strategies to compress and “distill” the necessary information so the AI processes only the most relevant data. This efficiency is tracked as a key performance indicator (KPI) for the role, because it keeps the AI’s “thought process” focused and fast.
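A minimal sketch of budgeted context packing, assuming word counts as a stand-in for real token counts and a toy term-overlap score in place of a learned relevance model (both are hypothetical simplifications).

```python
import re

def count_tokens(text: str) -> int:
    # Rough proxy: one token per whitespace-separated word. Real tokenizers differ.
    return len(text.split())

def relevance(chunk: str, query: str) -> float:
    """Toy score: the fraction of query terms that also appear in the chunk."""
    q = set(re.findall(r"[a-z0-9]+", query.lower()))
    c = set(re.findall(r"[a-z0-9]+", chunk.lower()))
    return len(q & c) / len(q) if q else 0.0

def pack_context(chunks: list[str], query: str, budget: int) -> list[str]:
    """Greedily keep the most relevant chunks that fit the token budget, in original order."""
    scores = [relevance(ch, query) for ch in chunks]
    ranked = sorted(range(len(chunks)), key=lambda i: scores[i], reverse=True)
    chosen, used = set(), 0
    for i in ranked:
        cost = count_tokens(chunks[i])
        if scores[i] > 0 and used + cost <= budget:
            chosen.add(i)
            used += cost
    return [chunks[i] for i in sorted(chosen)]

chunks = [
    "Invoice 1042 was paid on March 3.",
    "The cafeteria menu changes every Monday.",
    "Invoice 1042 totals 8,400 USD including tax.",
]
print(pack_context(chunks, "When was invoice 1042 paid?", budget=10))
```

Selection is by relevance, but the surviving chunks are returned in their original order so the model still reads them in the source’s sequence.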

The orchestrator also integrates Human-in-the-Loop (HITL) checkpoints. Rather than automating the entire process, they identify the “high-risk nodes” where a human must intervene for ethical or quality reasons. This design-led approach keeps the AI a tool for human augmentation rather than a black-box replacement. In 2026, the orchestrator’s value is measured by the safety and reliability of the automation they build, not just the speed of its output.
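A minimal sketch of a pipeline with a HITL checkpoint, assuming each step is flagged as high-risk at design time and that `approve` is a callback to some review queue. The step names, the refund scenario, and the 500 approval limit are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class Step:
    name: str
    action: Callable[[dict], dict]
    high_risk: bool = False  # flagged by the orchestrator at design time

def run_pipeline(steps: list[Step], state: dict,
                 approve: Callable[[str, dict], bool]) -> dict:
    """Run steps in order, pausing at each high-risk node until a human approves it."""
    for step in steps:
        if step.high_risk and not approve(step.name, state):
            # Stop before the risky action runs and record where the human said no.
            return {**state, "halted_at": step.name}
        state = step.action(state)
    return state

steps = [
    Step("draft_refund", lambda s: {**s, "refund": s["amount"]}),
    Step("issue_payment", lambda s: {**s, "paid": True}, high_risk=True),
]

def reviewer(step_name: str, state: dict) -> bool:
    # Stand-in for a review queue: this hypothetical reviewer approves refunds up to 500.
    return state.get("refund", 0) <= 500

print(run_pipeline(steps, {"amount": 120}, reviewer))
print(run_pipeline(steps, {"amount": 9000}, reviewer))
```

Only the payment step waits for a human; drafting runs unattended. That split is the “high-risk node” judgment the paragraph describes.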

The “Human-in-the-Loop” Governor: Ethical and Technical Oversight

In 2026, the “Governor” is a layer within the orchestration stack that prevents “Agentic Drift.” As agents interact, they can create feedback loops that lead to unexpected behaviors. The orchestrator programs Guardrail Agents that oversee these interactions and act as a real-time regulatory body, keeping the system within the legal and ethical boundaries set by the corporation and by the 2026 federal AI guidelines.
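A minimal guardrail sketch, assuming the governor checks every proposed agent action before it executes. The allowlist, the turn budget, and the “same call twice means a loop” rule are illustrative policies, not a standard; `Guardrail` and `GuardrailViolation` are hypothetical names.

```python
from dataclasses import dataclass, field

class GuardrailViolation(Exception):
    """Raised when an agent action breaks the governor's policy."""

@dataclass
class Guardrail:
    """Hypothetical policy layer checked before any agent action is executed."""
    allowed_actions: set[str]
    max_turns: int = 10
    turns: int = 0
    seen: set[tuple[str, str]] = field(default_factory=set)

    def check(self, agent: str, action: str, argument: str) -> None:
        self.turns += 1
        if self.turns > self.max_turns:
            raise GuardrailViolation(f"turn budget of {self.max_turns} exceeded")
        if action not in self.allowed_actions:
            raise GuardrailViolation(f"{agent} attempted disallowed action '{action}'")
        key = (action, argument)
        if key in self.seen:
            # The same call repeating is a cheap signal of an agent feedback loop.
            raise GuardrailViolation(f"repeated call {key} suggests a loop")
        self.seen.add(key)

guard = Guardrail(allowed_actions={"search", "summarize"}, max_turns=5)
guard.check("researcher", "search", "vendor contracts")
try:
    guard.check("writer", "send_email", "all-staff")
except GuardrailViolation as err:
    print("blocked:", err)
```

Raising an exception rather than silently skipping the action forces the orchestrator to decide what happens next, for example escalating to a human.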

This oversight also extends to Technical Verification. The orchestrator designs “Cross-Check” loops in which a secondary agent verifies the mathematical or factual output of the primary agent. By building these “adversarial” systems, the orchestrator reduces the risk of hallucinations, making AI viable for high-stakes, mission-critical infrastructure. In 2026, a “perfect” prompt is less valuable than a “self-correcting” system, and building that correction mechanism is the orchestrator’s primary technical responsibility.
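A minimal cross-check loop for arithmetic output. Here the verifier is a deterministic recomputation rather than a second model, an assumption that suits numeric claims; `primary_agent` is a hypothetical stub that deliberately errs on its first attempt so the retry path is visible.

```python
from decimal import Decimal

def primary_agent(items: list[str], attempt: int) -> Decimal:
    """Stub for an LLM that sums line items; it errs on the first attempt to show the loop."""
    total = sum(Decimal(x) for x in items)
    return total + Decimal("10") if attempt == 0 else total

def verifier_agent(items: list[str], claimed: Decimal) -> bool:
    """Independent check: recompute deterministically instead of trusting the claim."""
    return sum(Decimal(x) for x in items) == claimed

def cross_checked_total(items: list[str], max_attempts: int = 3) -> Decimal:
    for attempt in range(max_attempts):
        claimed = primary_agent(items, attempt)
        if verifier_agent(items, claimed):
            return claimed
    raise ValueError("primary agent failed verification; escalate to a human")

print(cross_checked_total(["19.99", "5.01", "75.00"]))  # 100.00
```

Using `Decimal` instead of floats keeps the verifier’s equality check exact for currency amounts.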

Hiring Data: The ROI of Orchestration vs. Basic LLM Use

The 2026 labor market has a clear preference for the Orchestrator over the Prompt Engineer. Hiring data shows that roles requiring “System Design” and “Workflow Integration” offer salaries 40% higher than those focusing purely on content generation. This ROI is driven by the fact that an orchestrator can replace entire manual departments with a single, well-designed agentic pipeline, whereas a prompt engineer can only increase the efficiency of a single individual.

To understand the shift, we must look at how the technical requirements for “AI-Ready” roles have evolved in the last two years:

| Metric | Prompt Engineering (2024) | AI Orchestration (2026) |
| --- | --- | --- |
| Core Competency | Linguistic Nuance & “Magic Words” | System Architecture & Data Flow |
| Output Type | Static Text/Images | Autonomous, Multi-Step Workflows |
| Tool Reliance | Single LLM Interface (Chat) | Integrated API Ecosystems & RAG |
| Value to Firm | Individual Productivity Boost | Operational Scalability (Team Replacement) |
| Technical Barrier | Low (Natural Language) | Moderate to High (Python/APIs/Logic) |

As firms in 2026 move toward “Lean Operations,” the orchestrator is seen as a primary source of operational leverage. By automating complex decision-making processes that previously required teams of middle managers, the orchestrator has a direct, measurable impact on the company’s profit margins. This ROI is what makes the role one of the most stable and future-proof career paths in the 2026 economy, as demand for “intelligent automation” continues to outstrip the supply of qualified talent.

Future-Proofing: How to Build an Orchestration Portfolio

Building a portfolio for 2026 requires a shift from “showing results” to “showing the plumbing.” A hiring manager no longer wants to see a gallery of AI-generated art; they want to see a workflow diagram of how a system handles a complex, multi-stage request. Your portfolio should include “Technical Artifacts” such as API documentation for your agents, logic maps showing how you handle data mismatches, and evidence of how you optimized inference costs for a specific business case.

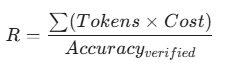

In 2026, your “Proof-of-Work” must include Performance Metrics. You should be able to demonstrate how your orchestration reduced “Time-to-Task” or improved accuracy compared to a baseline prompt. Use LaTeX to express your efficiency models, such as an “Inference Cost-to-Accuracy Ratio.”
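One possible formalization, offered as an assumed definition rather than an established industry formula: for $N$ evaluation requests with inference costs $c_i$, system outputs $\hat{y}_i$, and reference answers $y_i$, divide average cost by accuracy. The $\tfrac{1}{N}$ factors cancel, so the ratio reduces to cost per correct answer (lower is better).

$$
R = \frac{\tfrac{1}{N}\sum_{i=1}^{N} c_i}{\tfrac{1}{N}\sum_{i=1}^{N} \mathbf{1}\left[\hat{y}_i = y_i\right]}
  = \frac{\sum_{i=1}^{N} c_i}{\sum_{i=1}^{N} \mathbf{1}\left[\hat{y}_i = y_i\right]}
$$

With hypothetical figures: an orchestrated pipeline that spends 2.00 USD on 100 test requests and answers 80 correctly has $R = 2.00 / 80 = 0.025$ USD per correct answer, versus $R = 5.00 / 70 \approx 0.071$ USD for a baseline prompt that spends 5.00 USD and answers 70 correctly.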

By documenting your career in these technical terms, you prove that you are an AI-Strategist capable of delivering industrial-grade solutions. This level of technical evidence is what will separate the leaders from the laggards as the “Skills-First” revolution of 2026 continues to reshape the professional world.

FAQ: Mastering AI Workflows

Do I need to be a software developer to become an AI Orchestrator in 2026?

Not necessarily, but you need “Code Literacy.” While you may not write complex algorithms from scratch, you must understand how APIs function, how to read JSON data structures, and how to use Python or “Low-Code” orchestration platforms to link different models together. In 2026, the “Non-Technical Orchestrator” is more of a system designer who understands the logic of the code even if the AI writes the final syntax.
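As an illustration of that level of code literacy, the sketch below reads a JSON tool call of the kind an agent might emit and checks the fields an orchestrator would depend on. The JSON shape and the `lookup_order` tool are hypothetical; only Python’s standard `json` module is real.

```python
import json

# Hypothetical JSON an agent might emit when it wants to call a tool.
raw = '{"tool": "lookup_order", "arguments": {"order_id": "A-1042"}, "confidence": 0.92}'

def parse_tool_call(text: str) -> tuple[str, dict]:
    """Decode the agent's JSON and check the fields the orchestrator depends on."""
    data = json.loads(text)  # raises json.JSONDecodeError (a ValueError) on malformed output
    if not isinstance(data, dict) or not isinstance(data.get("tool"), str):
        raise ValueError("missing or invalid 'tool' field")
    args = data.get("arguments", {})
    if not isinstance(args, dict):
        raise ValueError("'arguments' must be a JSON object")
    return data["tool"], args

tool, args = parse_tool_call(raw)
print(tool, args["order_id"])  # lookup_order A-1042
```

Reading code at this level, knowing what each check guards against, is the skill the answer describes, even when an AI writes the syntax.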

How is AI Orchestration different from traditional “Automation”?

Traditional automation is “If-This-Then-That” logic, which is rigid and breaks easily. AI Orchestration in 2026 is “Goal-Oriented” and “Probabilistic.” Instead of a fixed path, you give the system a goal, and the AI agents reason their way through obstacles, choosing different tools or paths based on the real-time data they encounter. It is a more flexible, resilient, and “intelligent” form of automation.

What is the “Cost-to-Reasoning” ratio I should monitor in 2026?

This is a measure of how much you spend on GPU or API credits to achieve a specific reasoning outcome. In 2026, orchestrators use “Model Routing” to minimize this ratio: you don’t use a high-cost frontier model to summarize an email; you use a small, distilled model. Mastering this “financial orchestration” is a key part of the 2026 career path, as it makes you as much an asset to the CFO as to the CTO.

How do I protect company data when using multi-agent systems?

In 2026, the technical standard is the “Privacy-First Agentic Layer.” Orchestrators use “Anonymization Agents” that strip PII (Personally Identifiable Information) before data is sent to a third-party LLM. Additionally, many 2026 workflows rely on “Local LLMs” for sensitive data processing, only using the cloud for non-critical reasoning tasks. Building these security “checkpoints” into your workflow is a mandatory part of orchestration compliance.
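A minimal sketch of an anonymization step, assuming regex-detectable PII (emails, US-style SSNs and phone numbers) and a lookup table kept locally so originals can be restored after the third-party model replies. The patterns and placeholder format are illustrative; names such as “Dana” pass through untouched, so a real layer would also need something like a named-entity recognition model.

```python
import re

# Illustrative patterns only; production PII detection needs far broader coverage.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}

def anonymize(text: str) -> tuple[str, dict[str, str]]:
    """Replace PII with numbered placeholders; return redacted text and a local lookup table."""
    mapping: dict[str, str] = {}
    for label, pattern in PII_PATTERNS.items():
        def substitute(match: re.Match) -> str:
            token = f"<{label}_{len(mapping) + 1}>"
            mapping[token] = match.group(0)  # the table never leaves the local environment
            return token
        text = pattern.sub(substitute, text)
    return text, mapping

def restore(text: str, mapping: dict[str, str]) -> str:
    """Re-insert the original values after the third-party model responds."""
    for token, original in mapping.items():
        text = text.replace(token, original)
    return text

message = "Contact Dana at dana@example.com or 555-867-5309; SSN 123-45-6789."
redacted, table = anonymize(message)
print(redacted)  # Contact Dana at <EMAIL_1> or <PHONE_3>; SSN <SSN_2>.
```

Only `redacted` would be sent to the external LLM; `restore` runs on the response inside the company boundary.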

Are there careers for “Humanities Majors” in AI Orchestration?

Yes. The 2026 market has massive demand for “Agent Philosophers” and “Linguistic Architects.” Orchestration requires a deep understanding of human nuance, ethics, and logic to design the “Guardrails” and “Goal Definitions” that agents follow. If you can map out human reasoning and translate it into a structured system for an AI to follow, you are a valuable asset in the orchestration ecosystem.

How does “Agentic Hallucination” differ from standard LLM Hallucination?

In a multi-agent system, hallucinations can compound: Agent A passes a false fact to Agent B, which treats it as truth. In 2026, the orchestrator prevents this through “Consensus Verification.” You design the system so that Agent C must verify Agent A’s output before Agent B is allowed to act on it. This “Multi-Agent Audit” is the gold standard for reliability in the 2026 workplace.
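A minimal sketch of the Agent C gate, modeling “Agent C” as a panel of verifiers that vote on each claim from Agent A; only claims reaching a quorum are passed to Agent B. The verifiers are stubs that look claims up in small hypothetical knowledge bases; in a real system each might be a separate model or tool call.

```python
from typing import Callable

Verifier = Callable[[str], bool]

def consensus_gate(claims: list[str], verifiers: list[Verifier], quorum: int) -> list[str]:
    """Forward only the claims that at least `quorum` verifiers independently accept."""
    return [c for c in claims if sum(v(c) for v in verifiers) >= quorum]

def make_verifier(knowledge: set[str]) -> Verifier:
    # Stub verifier: accepts a claim only if it appears in its own reference set.
    return lambda claim: claim in knowledge

# Hypothetical reference data, deliberately uneven across the three verifiers.
KB_1 = {"Paris is the capital of France", "Water boils at 100 C at sea level"}
KB_2 = {"Paris is the capital of France"}
KB_3 = {"Paris is the capital of France", "Water boils at 100 C at sea level"}
verifiers = [make_verifier(kb) for kb in (KB_1, KB_2, KB_3)]

agent_a_output = [
    "Paris is the capital of France",
    "Water boils at 100 C at sea level",
    "The Moon is made of cheese",
]
# Agent B only ever sees what passes Agent C's majority vote.
agent_b_input = consensus_gate(agent_a_output, verifiers, quorum=2)
print(agent_b_input)
```

Requiring a quorum rather than unanimity tolerates one verifier with incomplete knowledge while still blocking claims that no verifier can support.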