In 2026, the “robot boss” is no longer a metaphor. Landmark regulations and new federal guidelines have granted workers unprecedented rights to audit the algorithms that monitor, score, and discipline them.

By early 2026, the traditional human manager has been replaced in many sectors by Algorithmic Management systems. These platforms, often colloquially referred to as “Bossware,” have moved beyond the invasive screen-tracking of the early 2020s into sophisticated, multi-modal analysis. In the 2026 workplace, algorithms monitor everything from the “sentiment” of your professional communications to the latency of your decision-making. For the modern professional, understanding the logic behind these systems is no longer optional; it is a prerequisite for maintaining autonomy and career stability in an automated hierarchy.

The primary driver of this shift in 2026 is the need for operational scalability. Human managers cannot process the massive data streams generated by remote, AI-augmented teams. Consequently, algorithms are tasked with “optimizing” human labor, assigning tasks based on real-time efficiency scores and predicting burnout before the employee recognizes the symptoms. However, this level of oversight has created a new challenge: the “Black Box” management problem, where employees are penalized or promoted based on calculations that remain invisible to them.

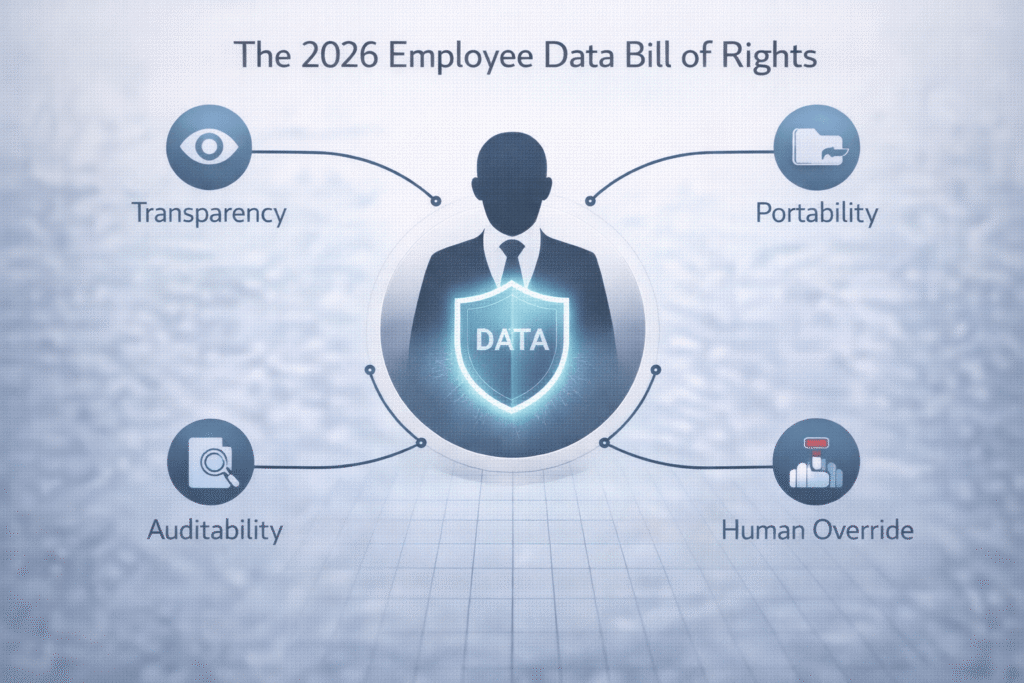

Fortunately, the legal landscape of 2026 has adapted through the implementation of comprehensive Data Sovereignty Rights. These regulations require that any algorithmic system affecting a worker’s livelihood must be transparent, auditable, and, most importantly, explainable. The 2026 professional must now view themselves as a “Data Governor” of their own career, utilizing these new legal tools to ensure that the “Invisible Supervisor” remains a fair and accurate evaluator of their contribution, rather than a flawed automated judge.

The New Bill of Rights: Data Sovereignty and the “Right to Explanation”

In 2026, the power dynamic between employer and employee is mediated by the “Right to Explanation.” This technical mandate ensures that if an algorithm determines your performance score or recommends a disciplinary action, you have the right to receive a human-readable breakdown of the data inputs used to reach that conclusion. This prevents companies from hiding behind “proprietary AI logic” to justify arbitrary management decisions. Understanding how to exercise this right is the first line of defense for the 2026 workforce.
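To make the idea of a “human-readable breakdown” concrete, here is a minimal sketch in Python. It assumes, purely for illustration, that the performance score is a weighted sum of normalized inputs; real scoring systems may work very differently. The names `InputSignal`, `explain_score`, and `format_explanation`, and the sample input names, are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InputSignal:
    name: str      # hypothetical input name, e.g. "tickets_closed"
    value: float   # normalized reading, assumed to lie in 0..1
    weight: float  # how strongly the (assumed) scoring model counts this input


def explain_score(signals: list[InputSignal]) -> dict:
    """Return the total score plus each input's contribution to it."""
    contributions = {s.name: s.value * s.weight for s in signals}
    return {
        "score": sum(contributions.values()),
        # Largest contributions first, so the main drivers are easy to spot.
        "breakdown": sorted(contributions.items(), key=lambda kv: kv[1], reverse=True),
    }


def format_explanation(explanation: dict) -> str:
    """Render the breakdown as plain text a non-specialist can read."""
    lines = [f"Overall score: {explanation['score']:.2f}"]
    for name, contribution in explanation["breakdown"]:
        lines.append(f"  {name}: {contribution:+.2f}")
    return "\n".join(lines)


if __name__ == "__main__":
    sample = [
        InputSignal("tickets_closed", 0.8, 0.5),
        InputSignal("message_tone", 0.5, 0.2),
    ]
    print(format_explanation(explain_score(sample)))
```

The point of the sketch is the shape of the answer: a total, plus a ranked list of which inputs moved it and by how much. That is the minimum an employee would need in order to contest a specific input.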

The 2026 Data Sovereignty framework is built upon four technical pillars that every professional should master:

- Transparency of Input: The right to know exactly what data points (e.g., Slack tone, GitHub commits, webcam eye-tracking) are being analyzed.

- Algorithm Auditability: The ability to request a “Bias Report” to verify that the management AI is not discriminating based on age, gender, or cognitive style.

- Data Portability: The technical right to “export” your verified performance data when leaving a company, allowing you to carry your “Productivity Credit” to your next role.

- Correction Mechanism: A standardized process to challenge and correct “Dirty Data”—false signals that could unfairly lower your organizational standing.

Furthermore, these rights extend to the metadata of your labor. In 2026, your “digital exhaust” (the trail of data you leave while working) is considered your intellectual property in many jurisdictions. This shift has forced companies to treat worker data with the same level of security and respect as customer data. For the career strategist, this means your “Performance Log” is now a valuable professional asset that can be used to negotiate better terms or prove your ROI with mathematical precision.

Finally, the 2026 sovereignty rules require a “Human-in-the-Loop” (HITL) override. No algorithm may terminate an employee without a human review of the underlying data. This ensures that the final decision remains an act of human leadership rather than a statistical execution. As we navigate 2026, the most successful professionals are those who proactively monitor their own “Sovereignty Reports,” ensuring that their digital twin aligns with their actual professional output.

Technical Resilience: Auditing Your Own Bossware Data

Technical resilience in 2026 starts with Self-Auditing. Professionals are increasingly using their own “Personal Analytics” tools to cross-reference the data collected by their employers. By maintaining your own log of tasks, outcomes, and time investment, you create a “Shadow Audit” that can be used to challenge algorithmic errors. This practice is essential for preventing “Feedback Loops,” where a minor glitch in tracking software leads to a downward spiral in your performance rating.
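A Shadow Audit can be as simple as comparing two records of the same work. The sketch below assumes both logs can be reduced to “hours per task” and that an employer export is available in that shape; the function name `shadow_audit`, the field names, and the 15-minute tolerance are all hypothetical choices, not a standard.

```python
def shadow_audit(personal_log: dict[str, float],
                 employer_log: dict[str, float],
                 tolerance_hours: float = 0.25) -> list[dict]:
    """Compare hours per task in two logs and list the disagreements."""
    discrepancies = []
    # Walk every task that appears in either log, in a stable order.
    for task in sorted(set(personal_log) | set(employer_log)):
        mine = personal_log.get(task)
        theirs = employer_log.get(task)
        if mine is None or theirs is None:
            # A task recorded in only one log is always worth raising.
            discrepancies.append({"task": task, "mine": mine, "theirs": theirs,
                                  "issue": "missing from one log"})
        elif abs(mine - theirs) > tolerance_hours:
            discrepancies.append({"task": task, "mine": mine, "theirs": theirs,
                                  "issue": "hours differ"})
    return discrepancies


if __name__ == "__main__":
    personal = {"report": 3.0, "review": 1.5, "deploy": 2.0}
    employer = {"report": 3.1, "review": 0.5}
    for row in shadow_audit(personal, employer):
        print(row)
```

Small differences are ignored on purpose: a tolerance keeps the audit focused on the gaps that could plausibly change a rating, which makes any challenge easier to defend.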

This level of vigilance is part of a larger trend known as Data Unionization. In 2026, groups of employees are pooling their anonymized management data to identify “Systemic Algorithmic Bias.” If a specific department is consistently receiving lower scores due to a flawed “Efficiency Formula” in the company’s Bossware, the data-backed evidence allows the team to demand a technical recalibration of the supervisor algorithm. This is the 2026 version of a collective bargaining agreement.
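As a rough illustration of what pooled data makes possible, the sketch below flags groups whose average score trails the pooled average by more than a chosen margin. The function name `flag_low_scoring_groups`, the group labels, and the 10-point threshold are assumptions for the example. A gap of this kind is a prompt for investigation, not proof of bias, and every group is assumed to contain at least one score.

```python
from statistics import mean


def flag_low_scoring_groups(scores_by_group: dict[str, list[float]],
                            gap_threshold: float = 10.0) -> dict[str, float]:
    """Return groups whose mean score trails the pooled mean by more than the threshold."""
    # Pool every anonymized score to get the organization-wide baseline.
    all_scores = [s for scores in scores_by_group.values() for s in scores]
    pooled_mean = mean(all_scores)

    flagged = {}
    for group, scores in scores_by_group.items():
        gap = pooled_mean - mean(scores)
        if gap > gap_threshold:
            flagged[group] = round(gap, 2)
    return flagged


if __name__ == "__main__":
    pooled = {
        "support": [50, 55, 60],
        "engineering": [80, 85, 90],
        "sales": [78, 82, 80],
    }
    print(flag_low_scoring_groups(pooled))
```

With only a handful of scores per group, a difference like this can easily be noise; a real analysis would need larger samples and a proper statistical test before demanding a recalibration.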

In 2026, your data is your testimony; if you do not audit the algorithms managing your career, you are essentially allowing a machine to write your professional history without a proofreader.

The 2026 professional must also be wary of “Biometric Creep.” Some advanced bossware systems now attempt to analyze heart-rate variability or micro-expressions to gauge “Engagement.” While companies claim this is for “Wellness Support,” the technical reality is that it often serves as a proxy for surveillance. Exercising your right to Opt-Out of non-essential biometric tracking is a key strategic move for maintaining your data sovereignty and personal boundaries in a hyper-connected environment.

The Economics of Performance Scores: From KPI to Credit

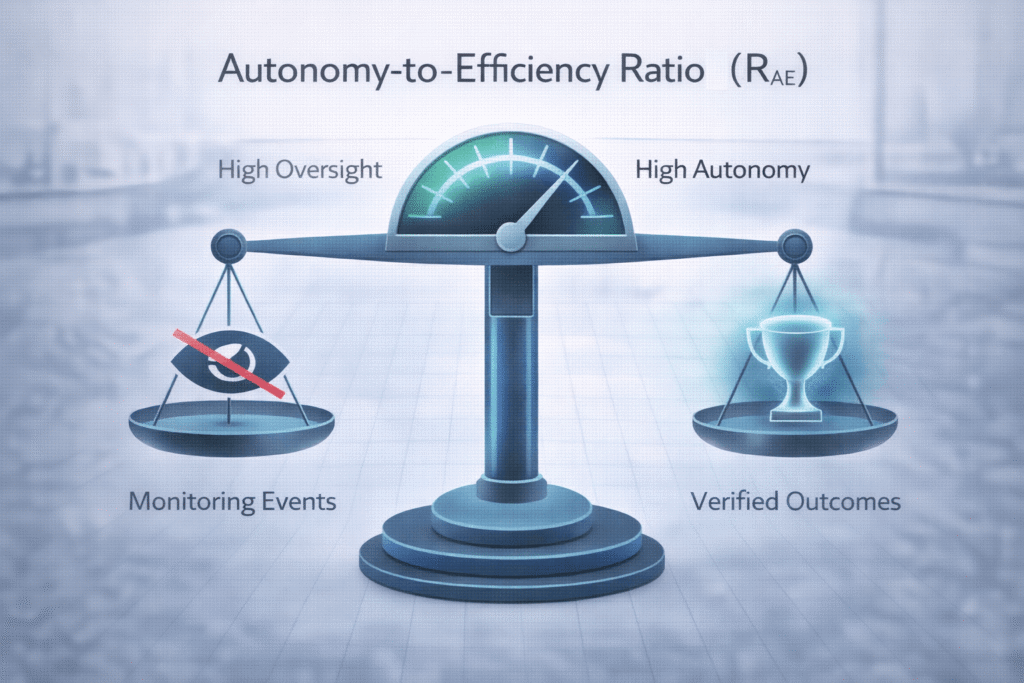

In the 2026 economy, your performance score is no longer just a figure for your annual review; it is a form of Career Credit. High-trust organizations use these scores to automate “Autonomy Grants,” where top performers are exempted from invasive monitoring protocols as a reward for consistent output. This creates a hierarchy where “Sovereignty” is earned through verified data, allowing the best workers to operate in a high-trust, low-surveillance environment.

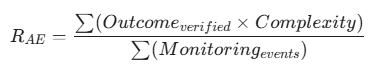

The measure used to determine these grants is the Autonomy-to-Efficiency Ratio (RAE). In 2026, orchestrators use this ratio to justify reducing the frequency of algorithmic checks for specific employees.

A high RAE indicates that an employee delivers complex results with minimal supervision, technically proving that additional bossware is redundant and counterproductive. By focusing on this ratio, the 2026 professional can turn the “Invisible Supervisor” into a partner, using the algorithm’s own data to prove they deserve more freedom. This “Optimization of Autonomy” is the hallmark of the elite 2026 career path.

Future-Proofing: Negotiating Your Digital Privacy in 2026

Negotiating your contract in 2026 must include a “Digital Privacy Addendum.” It is no longer enough to negotiate salary and equity; you must negotiate the parameters of your surveillance. This includes defining what data can be collected, how long it can be stored, and who has the technical rights to the “derived insights” generated by the management AI. In 2026, a “High-Sovereignty Contract” is the new status symbol of top-tier talent.

Furthermore, you must secure your “Right to Disconnect” in the algorithmic sense. This means ensuring that the management software is configured to “Stop Tracking” at the end of your shift. In an era of always-on connectivity, keeping your personal and professional data strictly separated is a vital strategy for long-term mental health and career longevity. The 2026 professional is not a 24/7 data point; they are a strategic asset that requires periods of “unmonitored recovery” to remain at peak performance.
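One way to check that tracking really stops at the end of a shift is to test exported monitoring timestamps against the agreed working window. This is a minimal sketch that assumes you can obtain such timestamps and that the shift is defined by a start and end time of day; the function names `tracking_allowed` and `out_of_shift_events` are hypothetical.

```python
from datetime import datetime, time


def tracking_allowed(moment: datetime, shift_start: time, shift_end: time) -> bool:
    """Return True only while `moment` falls inside the agreed shift."""
    now = moment.time()
    if shift_start <= shift_end:
        # Ordinary daytime shift, e.g. 09:00 to 17:00 (end is exclusive).
        return shift_start <= now < shift_end
    # Overnight shift, e.g. 22:00 to 06:00, wraps past midnight.
    return now >= shift_start or now < shift_end


def out_of_shift_events(events: list[datetime],
                        shift_start: time,
                        shift_end: time) -> list[datetime]:
    """Return monitoring events that were recorded outside the shift window."""
    return [e for e in events if not tracking_allowed(e, shift_start, shift_end)]


if __name__ == "__main__":
    logged = [
        datetime(2026, 3, 2, 10, 0),
        datetime(2026, 3, 2, 17, 30),
        datetime(2026, 3, 2, 21, 15),
    ]
    for event in out_of_shift_events(logged, time(9, 0), time(17, 0)):
        print("Tracked outside shift:", event.isoformat())
```

The sketch ignores time zones, breaks, and weekends. A contractual definition of “end of shift” would need to settle those details before a check like this could be relied on.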

FAQ: Protecting Your Digital Career Footprint

Can an employer technically use my “Sentiment Analysis” data to fire me in 2026?

While companies use sentiment analysis to flag “toxic” or “disengaged” environments, firing someone solely based on an AI’s interpretation of their “tone” is high-risk for the employer. Under 2026 labor laws, you can challenge this by demanding a Contextual Audit. If the AI misidentified professional “frustration” with a broken process as “disloyalty,” the company is required to reverse the decision if no other performance issues exist.

What happens to my management data after I leave a company in 2026?

The 2026 “Right to be Forgotten” for employees ensures that you can request the permanent deletion of your granular monitoring logs (e.g., every keystroke or gaze-track) while retaining your verified “Performance Certificates.” This technical separation ensures that your past “surveillance history” does not follow you to your next role, while your “Achievements” remain part of your portable professional record.

Are “AI Performance Checks” biased against neurodivergent workers?

This is a major concern in 2026. Algorithms that measure “typical” productivity patterns or “ideal” communication styles can unfairly penalize neurodivergent professionals. In 2026, you have the right to request a “Reasonable Algorithmic Accommodation,” where the system’s parameters are adjusted to account for your working style, ensuring a fair and equitable evaluation.

How can I tell if my company is using “Secret Bossware” in 2026?

Technically, “Secret Bossware” is illegal under the 2026 Transparency Acts. Companies are required to provide a Disclosure Ledger listing all active monitoring software. If you suspect hidden tracking, you can use “Packet Sniffers” or “Privacy Auditing Apps” to detect unauthorized data transmissions from your work hardware. Finding unlisted surveillance is a major compliance violation that provides significant legal leverage for the employee.
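Once you have a list of the hosts your work device contacts (from whatever capture tool you are permitted to use), comparing it with the Disclosure Ledger is a simple set check. The sketch below assumes the ledger can be reduced to a set of domain names and that a ledger entry also covers its subdomains. The function name `undisclosed_destinations` and all hostnames are hypothetical placeholders.

```python
def undisclosed_destinations(observed_hosts: list[str],
                             disclosed_hosts: set[str]) -> list[str]:
    """Return observed hostnames that no ledger entry accounts for."""
    disclosed = {d.lower() for d in disclosed_hosts}

    def is_disclosed(host: str) -> bool:
        # A ledger entry covers the domain itself and any of its subdomains.
        return any(host == d or host.endswith("." + d) for d in disclosed)

    # Normalize case and trailing dots so duplicates collapse.
    normalized = {h.lower().rstrip(".") for h in observed_hosts}
    return sorted(h for h in normalized if not is_disclosed(h))


if __name__ == "__main__":
    observed = [
        "api.timetracker.example",
        "API.timetracker.example",
        "telemetry.unknown-vendor.example",
        "updates.os.example",
    ]
    ledger = {"timetracker.example", "updates.os.example"}
    print(undisclosed_destinations(observed, ledger))
```

An unlisted host is only a lead: it may belong to an operating-system service or a dependency of disclosed software, so it is worth asking the employer to account for it before treating it as hidden surveillance.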

What is the “Data Sovereignty Portability” standard?

By 2026, most PHAs (Professional Housing/Career Agencies) have adopted a standard for Portable Performance Credentials. This allows you to take your “Efficiency Scores” and “Reliability Ratings” from one company and “Plug” them into the hiring portal of another. It reduces the “trust-building” time in a new role, as you arrive with verified, cryptographic proof of your 2026 work history.

Can my employer monitor my “Off-the-Clock” activity via company-issued devices?

In 2026, the standard is the “Dual-Profile” architecture. Your work hardware must have a partition that “goes dark” during personal hours. If a company is caught scraping data from your “Personal Partition” or monitoring your location outside of work hours, it faces massive fines. Protecting this boundary is a key part of your 2026 sovereignty strategy; if the “Glow” of the work profile stays on after hours, it is either a bug or a compliance breach.